OpenAI Tightened ChatGPT Limits. What It Means for Plus, Pro, and Heavy Use

A detailed look at how OpenAI changed paid ChatGPT plan limits in 2026: what became stricter, why the company moved to a new access model, how it ties into Codex, fallback models, credits, and compute pressure, and what users should expect next.

If you only need the short version, here it is: OpenAI no longer behaves as if heavy ChatGPT usage can stay hidden inside one fixed subscription forever. The company is openly rebuilding access so that limits still smooth demand, while everything beyond a “normal” usage pattern moves toward a higher tier, credits, or a lighter fallback model.

In its post “Beyond rate limits,” OpenAI says bluntly that the old strategy of simply raising limits stopped working because demand grew faster than expected. [5] At the official messaging level, OpenAI does not say “we nerfed Plus.” It says “we're updating Plus and Pro to better support growing Codex usage.” That is how the update was framed in the official X post that the community then circulated. [10]

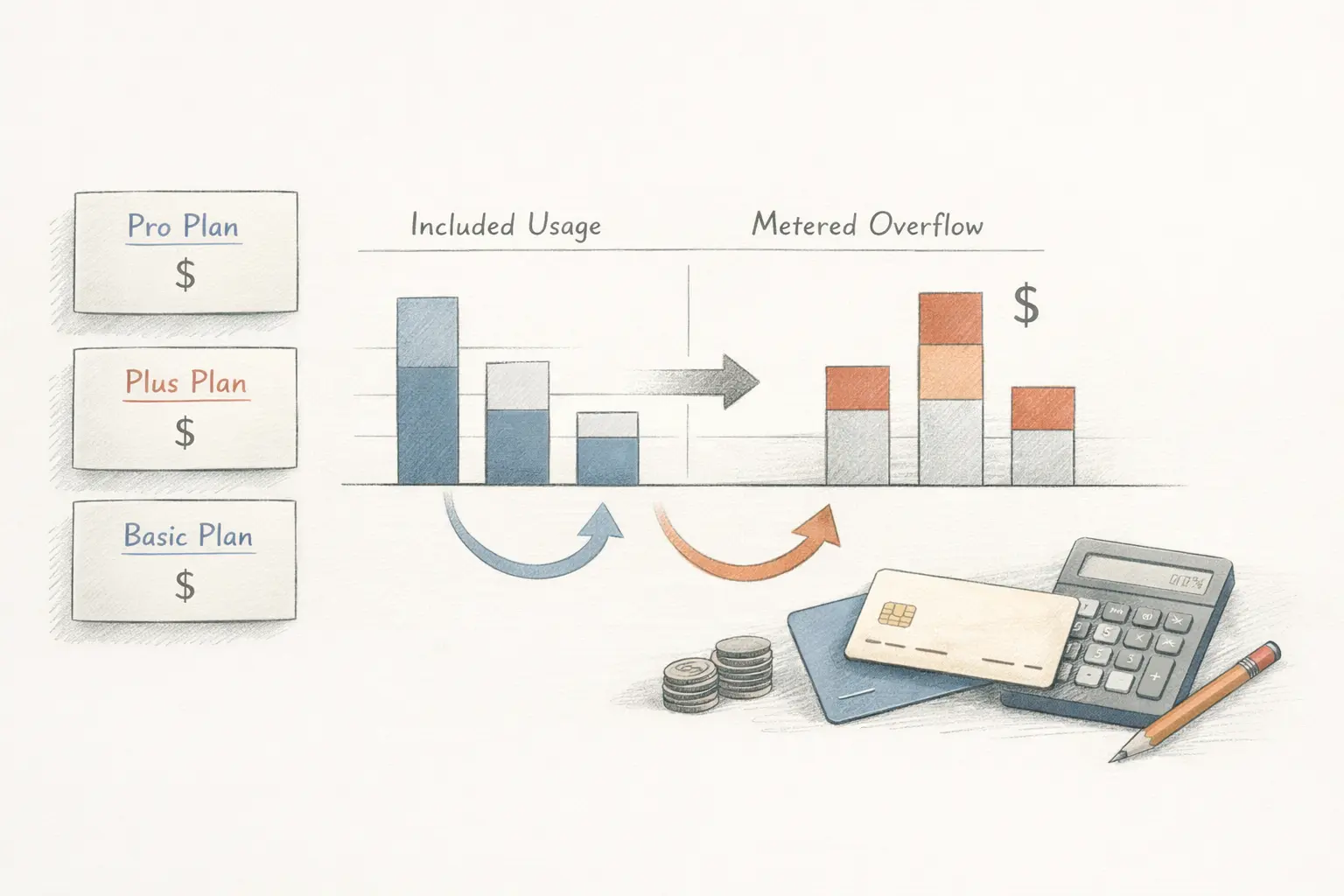

But when you break the changes down, the picture is straightforward. First, OpenAI inserted a new $100 Pro plan between Plus and the older $200 Pro tier. Second, for heavy usage it is moving away from vague promises of “higher limits” and toward a much clearer hierarchy: included usage, fallback, credits, or a more expensive plan. [1][3][4]

Codex makes that shift especially visible. In February, OpenAI still wrote about generous access for a limited period and then a later move to rate-limited access and flexible pricing, meaning paid continuation on top. [7] That structure is now live in the product. [4][5]

For the user, that is what a nerf feels like, even if OpenAI uses different wording. The lived effect is simple: what used to feel like a broader included allowance now pushes you toward fallback, credits, or an upsell sooner if your sessions are long and expensive.

If you only look at the user reaction, it is easy to reduce this to “the company wanted to sell less for the same money.” The official sources show a more complete picture. Demand, compute pressure, agentic usage, and monetization all started pushing in the same direction.

1. Demand grew faster than expected

In “Beyond rate limits: scaling access to Codex and Sora,” OpenAI says directly that both Codex and Sora grew very quickly over the last year and that users routinely hit rate limits once they started getting real value from the tools. [5]

2. Simply raising caps no longer works

In the same post, OpenAI says that simply increasing limits across the board weakens demand smoothing and fairness controls and makes the system run into a capacity ceiling faster. That sentence explains the logic of the tighter boundaries better than any marketing spin does. [5]

3. OpenAI has already shown it can hit serving limits

In the February 10 write-up, OpenAI described elevated error rates for paid GPT-5.2 plans as a temporary serving capacity shortfall. That is not proof that every new limit came from one incident, but it is a strong official signal that serving capacity is a real operational constraint. [9]

4. The company is moving toward a hybrid access model

On paper, OpenAI can describe this as a better access model. In daily work, the user experience is much more concrete than that.

| Comparison point | What the official source says | What it means in practice |
|---|---|---|
| GPT-5.4 Thinking | Plus and Business users get up to 3,000 messages per week, after which the model is no longer manually available. [2] | Heavy reasoning sessions now feel much more like a quota-controlled resource, not like a premium mode you can keep pushing indefinitely. |
| Fallback after the cap | After GPT-5.4 Thinking limits are hit, paid users fall back to GPT-5.4 mini. [1] | Work does not stop completely, but the quality and feeling of “included flagship access” are no longer guaranteed in long sessions. |
| Codex usage | After included limits are used up, users can buy credits instead of being forced straight into a plan upgrade. [4] | The subscription increasingly looks like a base package with paid extension on top, not like a sealed flat price. |
| Pro tiers | Pro $100 gives 5x usage versus Plus, and temporarily 10x for Codex. Pro $200 stays as the highest usage tier. [1][3] | Users are being segmented more deliberately: Plus for lighter use, Pro for real daily work, and the upper tier for expensive, parallel, sustained workflows. |
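
The cap-and-fallback rows above can be read as a simple routing rule. The following is a minimal sketch, not OpenAI's implementation: the 3,000-per-week figure and the model names come from the table, while `WeeklyUsage`, `pick_model`, and the counting logic are hypothetical names invented purely for illustration.

```python
from dataclasses import dataclass

# Figure taken from the table: weekly GPT-5.4 Thinking messages on Plus/Business.
WEEKLY_THINKING_CAP = 3000


@dataclass
class WeeklyUsage:
    """Hypothetical per-user counter that resets each week."""
    thinking_messages: int = 0


def pick_model(usage: WeeklyUsage, cap: int = WEEKLY_THINKING_CAP) -> str:
    """Return the model label a request would get under a cap-then-fallback rule.

    Assumption: one request counts as one message against the cap.
    """
    if usage.thinking_messages < cap:
        usage.thinking_messages += 1
        return "GPT-5.4 Thinking"
    # Cap reached: the request is still served, but by the lighter model.
    return "GPT-5.4 mini"


if __name__ == "__main__":
    usage = WeeklyUsage(thinking_messages=2999)
    print(pick_model(usage))  # last message inside the cap
    print(pick_model(usage))  # first message after the cap
```

The point of the sketch is the shape of the rule: hitting the cap changes which model answers, it does not block the request.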

This is the key part of the picture. If OpenAI were only changing limits around ordinary chat, it would look like another mild pricing recalibration. But almost everything here revolves around Codex, meaning agentic, tool-using, more expensive usage patterns. [1][5][7]

In the help article about using Codex with a ChatGPT plan, OpenAI explains that usage depends not just on message count, but on codebase size, task complexity, session length, and whether the task runs locally or in the cloud. [8] That matters because this kind of workload fits very badly into the old subscription logic of “one plan, one fuzzy allowance.”

At that point the new model stops pretending to be hidden. Users spend their included usage first and then can buy credits directly in Codex. [4] That looks like OpenAI trying to both protect service performance and move heavy engineering usage closer to an API-style economic model without kicking the user out of the ChatGPT subscription surface.
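
The order described here, included usage first and credits second, amounts to a small piece of arithmetic. A minimal sketch under stated assumptions: the function name `settle_task`, the abstract "usage units," and the idea that a task has a single numeric cost are all illustrative and do not describe how OpenAI actually meters Codex.

```python
def settle_task(cost: int, included_left: int, credits_left: int) -> tuple[int, int, int]:
    """Split a task's cost across the included allowance, then purchased credits.

    All quantities are hypothetical "usage units".
    Returns (drawn_from_included, drawn_from_credits, unmet).
    """
    if min(cost, included_left, credits_left) < 0:
        raise ValueError("cost and balances must be non-negative")

    # The plan's included allowance is always spent first.
    from_included = min(cost, included_left)
    remainder = cost - from_included

    # Only the overflow touches purchased credits.
    from_credits = min(remainder, credits_left)

    # Anything still unmet is where a fallback or a plan upgrade would come in.
    unmet = remainder - from_credits
    return from_included, from_credits, unmet


if __name__ == "__main__":
    print(settle_task(cost=10, included_left=4, credits_left=3))
```

A non-zero third value marks the point where the user runs out of both the included allowance and credits.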

It is important not to confuse fact with mood. The official sources tell you what OpenAI changed. Community discussions tell you how those changes actually feel in real use.

When you put all the official texts together, this does not look like a temporary anomaly. It looks like a clear product direction.

Summary

The most likely future is not a return to the older generosity. A more realistic expectation is better plan segmentation, more fallback behavior, and a tighter merger between subscription access and metered overflow.

OpenAI did not just shave a few numbers in a pricing table. It is rewriting the philosophy of access to expensive AI scenarios inside ChatGPT. For light usage, the subscription still behaves like a familiar product. For heavy usage, it increasingly behaves like a base package that sits in front of fallback, credits, or a plan upgrade.

For Plus users, the main takeaway is simple: if you use ChatGPT as a serious daily work tool, especially through Codex or reasoning-heavy models, the old feeling that “it covers almost everything” is going away. For Pro users, the takeaway is slightly different: OpenAI is trying to turn the plan into a more meaningful rung in the ladder, not just a more expensive Plus badge.

For the market as a whole, the signal is broader. Generative products that once felt like almost boundless subscriptions are moving toward mixed access models. If that trend holds, users should expect less abstract generosity and more explicit boundaries, fallback logic, and pay-as-you-go overflow on top.

Officially, OpenAI talks about updated Plus and Pro plans, fallback models, credits, and a new plan structure. But from the user's point of view, it clearly feels like stricter boundaries for heavy scenarios, especially around Codex and reasoning-heavy use.

OpenAI says directly that simply raising caps hurts demand smoothing and fairness controls and makes the service hit capacity limits faster. So for the company this is not just about monetization. It is also about operational stability. [5][9]

In practice there are three options: accept lighter fallback models, buy credits for Codex or Sora, or move to a higher tier. That is now the official product logic. [3][4][8]

Based on the official texts, a return to the older, more generous limits looks unlikely. A more realistic expectation is finer plan segmentation, more fallback behavior, and ChatGPT subscriptions that merge more tightly into a hybrid subscription-plus-credits model.
