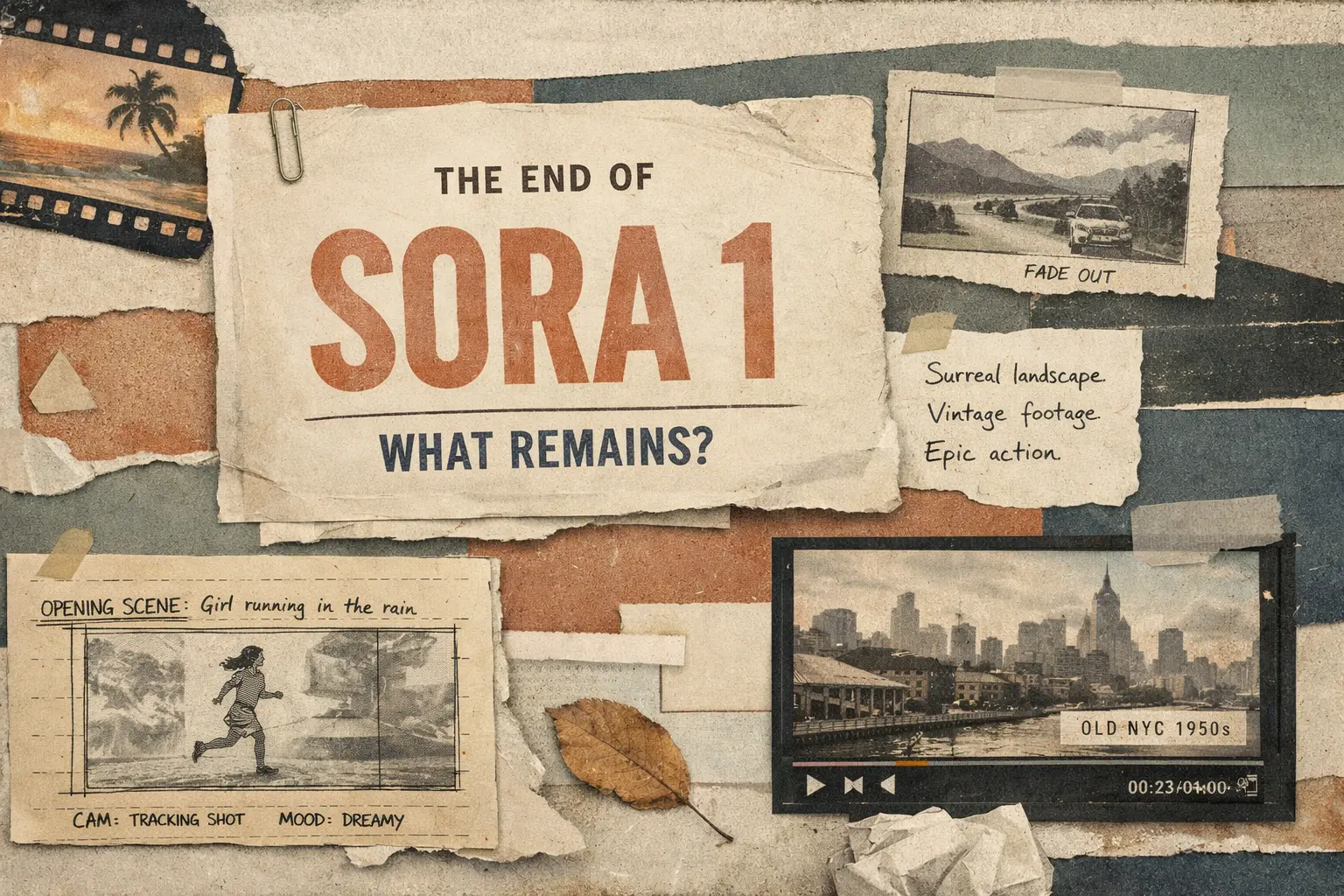

Sora 1 Ends. What Did It Leave Behind?

A clear look at Sora 1's sunset in 2026: what OpenAI actually shut down, how Sora evolved from the 2024 research preview to Sora 2, what it achieved, why the legacy version was retired, and why critics never fully stopped worrying about it.

Main point

If you take only one idea from this article, let it be this: the end of Sora 1 is not the end of Sora. OpenAI removed the older layer because it wanted one newer product, one newer stack, and one clearer story for the user.

Sora 1 stopped being available in the United States on March 13, 2026, while Sora 2 became the default Sora experience. [1]

According to OpenAI, Sora 1 relied on older models and infrastructure, and the company wanted a single, updated experience powered by Sora 2. [1]

The first thing to clarify is the headline itself. If we stay close to the official wording, OpenAI did not shut down Sora as a whole. The company shut down Sora 1 in the United States on March 13, 2026 and moved everyone to Sora 2. [1]

The help center says this fairly directly. Sora 1 is no longer available in the US, Sora now opens in Sora 2 by default, and image generation inside Sora is no longer available after that change. [1] That last detail shows how tightly the first product was tied to the older stack.

The official explanation is also low drama: Sora 1 relied on older models and infrastructure, and moving to one product reduces complexity while giving OpenAI room to keep developing Sora 2 on both web and mobile. [1] This reads more like product consolidation than a loud funeral for a failed tool.

Sora moved very fast. In a little over two years it went from a research preview that made the market stop and look, to a consumer app, and then to a legacy layer OpenAI was ready to remove.

Sora arrives as a research shock

In its research paper, OpenAI presented Sora as a model capable of generating up to a minute of high-quality video. But the bigger story was not just the length. It showed object permanence, longer scene coherence, camera motion, looping, video extension, interpolation, and a noticeably higher sense of consistency than the market was used to seeing. [3]

Sora becomes a real product surface

By the time Sora reached broader access, OpenAI was already presenting it as more than a model demo. AP wrote both about the release and the early limits around depicting people. That was a clear signal that the risk profile here was already very different from a normal image tool. [6]

Sora 2 changes the center of gravity

OpenAI described Sora 2 as more physically accurate, more realistic, more controllable, and able to generate synchronized dialogue and sound effects. It also launched through a new Sora app rather than just a web experiment. [4]

The app starts growing real product features

The release notes show how quickly the product grew: storyboards, cameos and later characters, stitching, styles, Android support, longer videos, image-to-video with people under stricter rules, and video extensions. [5]

Sora 1 becomes the legacy branch

OpenAI sunsets Sora 1 in the US, moves users to Sora 2 by default, and removes image generation from Sora itself. That is the moment when the original web-era Sora stops being the main product story. [1]

Summary

Sora did not have one life. First it was a research story, then a product story. Now only the second one remains.

People often reduce Sora to 'that AI video tool that looked better than the others.' That is too shallow. What made Sora important is that it compressed several stages of the market's progress into one short stretch of time.

It made long video feel plausible

Back in 2024, the point was not just pretty clips. OpenAI was showing that large-scale video training could hold together minute-long scenes, different aspect ratios, looping, extension, and more coherent multi-shot outputs than the market was used to. [3]

It pushed world-model talk into the mainstream

It turned video generation into a product, not just a demo

It moved faster than public trust did

The easiest way to understand the sunset is a direct comparison between Sora 1 and Sora 2. The name stayed the same, but the product logic did not.

| Comparison point | Sora 1 / original web era | Sora 2 / current direction |
|---|---|---|
| Core identity | Legacy web experience tied to older video and image generation infrastructure. [1] | Single default Sora experience, centered on newer models and a broader app-plus-web product. [1][4] |
| Flagship claim | Proof that high-fidelity, longer AI video could work at all. [3] | A more physically accurate, controllable model with synchronized dialogue and sound effects. [4] |
| Creative workflow | Prompting, image/video conditioning, looping, extension, interpolation, image generation. [1][3] | Storyboards, styles, characters, stitching, editor, extensions, image-to-video with people under stricter rules. [5] |
| Surface | Web-first, with the energy of a research preview and the status of a legacy branch. [1] | App-first product with feed logic, social reuse, and rollout to mobile platforms. [4][5] |
| After March 13, 2026 in the US | Removed. Users can no longer switch back. [1] | Default Sora experience. [1] |

The official explanation is short. OpenAI says Sora 1 depended on older models and infrastructure, and that moving to one Sora experience reduces complexity while letting the team keep developing Sora 2 on web and mobile. [1] For a product decision, that explanation already stands on its own.

But there is another layer too. By late 2025 and early 2026, Sora was no longer just a place for isolated generations. It had become an app with feed behavior, characters, styles, stitching, longer clips, mobile rollout, and a very different monetization logic. [4][5] Keeping a separate Sora 1 legacy branch next to that would have meant maintaining older image-and-video pathways while the main team was clearly building a different product.

There is also a trust and governance angle. One active product is easier to moderate, label, watermark, and update than two parallel surfaces with different assumptions and capabilities. OpenAI does not say that directly in the FAQ, but the overall product direction points there very clearly. [1][4][10]

The easiest way to write a bad retrospective about Sora is to pretend it was either only a big success or only a reckless launch. In reality, both lines ran at the same time.

Deepfakes moved from abstract fear to consumer reality

TIME reported that Reality Defender was able to bypass Sora's anti-impersonation protections within the first 24 hours, and its CEO described the result as a plausible sense of security. [7] That is a serious criticism because Sora's realism was exactly what made it so culturally visible.

Public-interest groups saw a release-speed problem

AP reported that Public Citizen called the Sora 2 release a consistent and dangerous pattern of rushing products to market without enough guardrails. [8] You can disagree with the wording, but it captures a real public trust problem around OpenAI's release tempo.

OpenAI had to keep tightening safety while expanding features

Sora 1 leaves behind something bigger than one interface. It changed the baseline for what people expect from AI video. Before Sora, many clips still looked like short-lived experiments. After Sora, the conversation shifted toward realism, scene continuity, editing workflows, audio, social reuse, and trust at scale. [3][4][5]

That is why the end of Sora 1 is not a minor housekeeping note. It closes the first phase of OpenAI's video story. The research-preview phase is over. The app-and-platform phase is now the real one.

If you want the simplest human reading of the whole story, it is this: Sora 1 showed that the market had already changed. Sora 2 is the version OpenAI believes is fit for continued public development. And the speed with which the old layer disappeared tells you how quickly the product, the infrastructure, and the surrounding risk profile all moved at once.

No, OpenAI did not shut down Sora as a whole. Officially, it shut down Sora 1 in the United States on March 13, 2026. Sora 2 remains the default Sora experience there. [1]

According to OpenAI, Sora 1 relied on older models and infrastructure. The company moved the product to a single Sora 2 experience in order to reduce complexity and keep developing the newer stack on web and mobile. [1]

In the US, users lost access to the legacy Sora 1 experience. Older generations and social activity also became unavailable after the cutoff unless users exported their data in time, and image generation inside Sora was removed as well. [1]

The first Sora mattered because it made longer and much more coherent AI video look plausible at a level the market had rarely seen before. OpenAI's 2024 paper highlighted minute-long scenes, object permanence, multi-shot consistency, looping, extension, and stronger stability across aspect ratios. [3]

OpenAI presents Sora 2 as a more physically accurate and more controllable model with synchronized dialogue and sound effects. On top of that, the product gained app-first features such as storyboards, characters, stitching, styles, an editor, and extensions. [4][5]

Because the same realism that made Sora impressive also intensified concerns around impersonation, consent, deepfakes, and copyright. External reporting from TIME, AP, and critics such as Public Citizen shows that these concerns remained central even as the product improved. [7][8]

This article is based on official OpenAI help and product pages, the original 2024 research paper, the Sora 2 launch materials from 2025, and outside reporting on safety, privacy, and public criticism around the product.

• 3. OpenAI Research: Video generation models as world simulators

• 6. AP News: OpenAI releases AI video generator Sora but limits how it depicts people

• 7. TIME: OpenAI's Sora Underscores the Growing Threat of Deepfakes

• 8. AP News: Public Citizen demands OpenAI withdraw Sora over deepfake dangers

• 10. OpenAI Help Center: Generating content with characters
